The Island of Monte Carlo

After making it through a challenging semester of 15-462/662, you take a nice long vacation on a tropical island. Unfortunately, on the way there you get caught in a storm that leaves you stranded on a deserted island with nothing but a coconut palm.

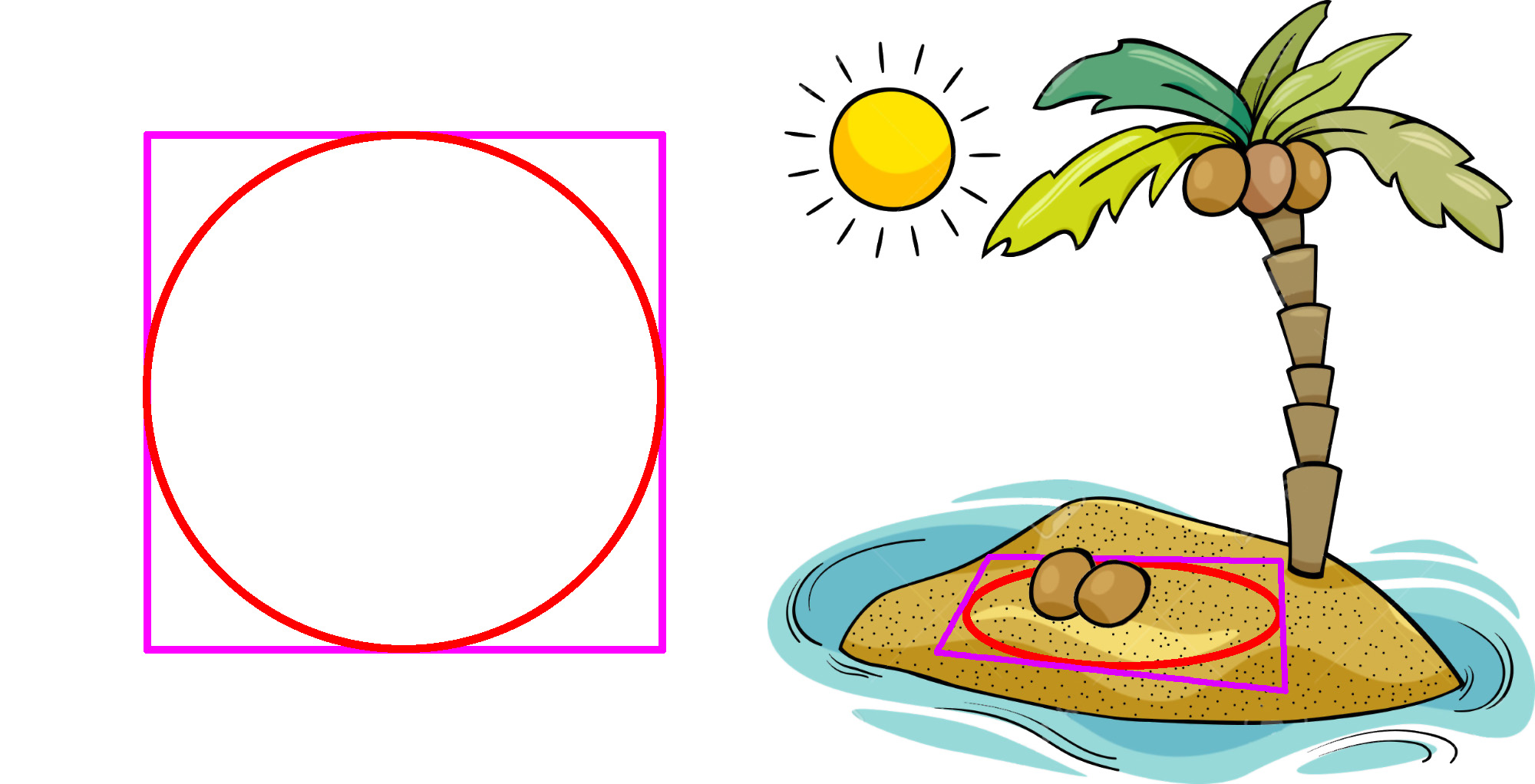

To pass the time as you wait for a rescue, you decide to use your newly-acquired Monte Carlo skills to estimate the value of pi. Using a stick and a little piece of rope, you draw a perfect circle and a square around it. You then climb high up into a tree and start tossing coconuts randomly down into the square. The fraction of coconuts that lands in the circle basically tells you the value of pi (though of course you must also account for the size of the square).

Implementing Monte Carlo

One of the reasons Monte Carlo is such a nice algorithm is its simplicity: you can get a basic estimator up and running with very little code. Write a short code snippet (in any language) that estimates the area of a circle, using the "coconut algorithm." Suppose nCoconuts is the number of coconuts you want to toss. You may assume you have a method randomReal(a,b) that returns a uniformly random value between a and b.

Running Monte Carlo

Let's now really run our code and see how well it estimates the value of pi!

In particular, run it for 1, 256, 6561, 65536, 390625, and 1679616 samples. To get a sense of how good the estimate is, print out each estimated value x as well as its relative error 1 – x/pi (which should be very close to zero for a good estimate). What do you notice?

Thinking About Monte Carlo

Why might the performance of Monte Carlo be so bad for the simple task of estimating the area of a disk? Is it the right tool for the job? Why or why not?

Regular Quadrature

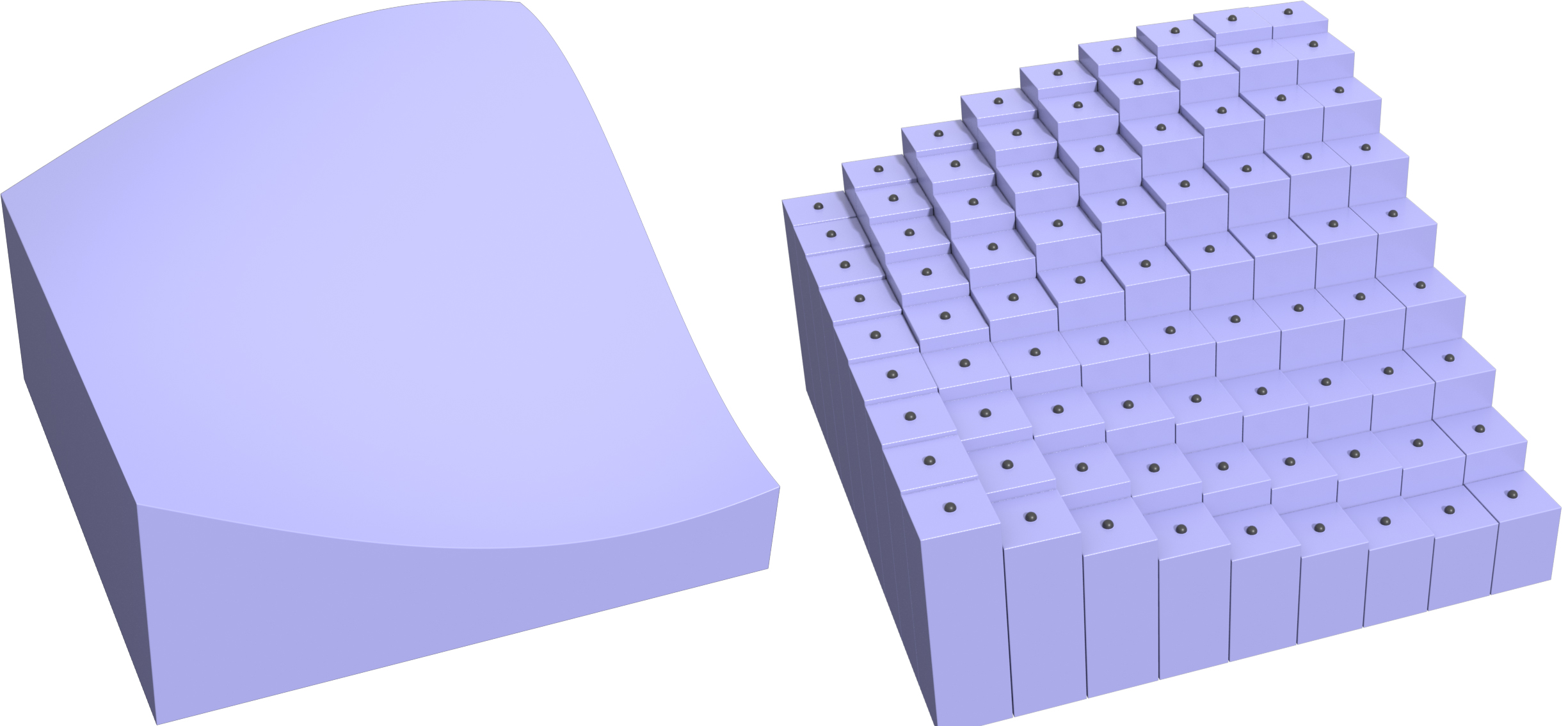

In the previous question, we saw that Monte Carlo is pretty inefficient at estimating the area of a disk. So, what else can we do? Let's go back to simpler, deterministic quadrature rules and see if they do any better. In particular, let's consider midpoint quadrature. For a function f(x) on a 1D interval [a,b], one-point midpoint quadrature estimates the integral as just the size of the interval times the function value at the its midpoint:

To make this rule more accurate, we can split the interval up into n equal-sized pieces to get the n-point midpoint quadrature

Where's the Middle?

How do you think we can generalize the 1-point midpoint rule to a multivariable function ?f(x,y), defined over the domain [ax,bx] x [ay,by]?? (where ax <= x <= bx and ay <= y <= by). How about a midpoint rule that uses more than one quadrature point? Describe your scheme in words, and give a formula.

Return to Monte Carlo Island

Back on Monte Carlo island, we're still throwing coconuts in the sand, waiting for pi to converge. Hope someone gets here soon. To see if we can make the time pass a bit quicker, let's replace the stochastic Monte Carlo scheme with our deterministic 2D midpoint scheme.

First of all, when we drop sample points (or coconuts) in a square in order to estimate area, what integral are we effectively computing? What are the integration bounds, and what is the integrand?

Implementing Regular Quadrature

Let's now translate equations into code (which is itself a valuable life skill!). Write a short code snippet (in any language) that estimates the area of a circle, using 2D midpoint quadrature. Suppose nX and nY are the number of quadrature points you want to use along the x and y axes, respectively.

Running Regular Quadrature

Let's again run our code and see how well it estimates the value of pi.

Run the midpoint quadrature code, letting nX=nY for values 1, 16, 81, 256, 625, and 1296. (Since the total number of samples is nX times nY, we'll end up with the same sample counts as in our Monte Carlo experiment.) Print out the estimated values for pi, as well as the relative error. What do you notice?

Thinking About Regular Quadrature

We've seen that both Monte Carlo and midpoint quadrature converge super slowly. But this seems nuts: all we're trying to do is estimate the area of a circle! If we can't do that efficiently, how can we ever hope to estimate (way) more complicated integrals using numerical quadrature?

What is the basic reason we're doing so much work for so little reward? [Hint: think about this question from the point of view of integration---where does midpoint quadrature need a lot of samples to be accurate, vs. very few samples?]

Beyond Regular Sampling

Adaptive Refinement

So far we've been sprinkling our samples uniformly over the domain (whether using Monte Carlo or midpoint quadrature), but obviously this isn't the best strategy in general: different integrands will have "interesting stuff" going on in some regions more than others.

What's a general strategy you could use to modify midpoint quadrature so that it focuses computational effort where it's really needed? [Hint: think back to some of our geometry lectures.]

Adaptive Area Estimation

A reasonable spatial data structure to use for our adaptive midpoint quadrature is a quad tree, where each quadrant of space is split into four equally-sized squares whenever a refinement criterion is met. What is a natural refinement criterion for integrating the area of a circle? I.e., how might we decide that a split is useful?

Implementing Adaptive Area Estimation

Suppose you've refined a quad tree according to the criterion from the previous question. How would you estimate the area of the circle? I.e., describe an adaptive midpoint quadrature rule.

Running Adaptive Area Estimation

Let's try it out---write a recursive method area( x0, x1, y0, y1, depth ) that estimates the area of a circle within a given rectangle from (x0,y0) to (x1,y1) using adaptive midpoint quadrature. You can assume a method f(x,y) that returns +1 for points inside the circle, and -1 for points outside the circle, and should stop at depth = 0.

Thinking About Adaptive Area Estimation

Now actually run your code and see how it behaves. Run it for a max depth of 1 through 8 and print out the estimated value of pi, as well as the total number of samples used (i.e., the total number of squares in your quad tree). How does this estimator compare to Monte Carlo and regular (non-adaptive) midpoint quadrature? [Note: in order to get a nontrivial answer you will need to invoke the method once per quadrant of the circle (otherwise all four corners will start outside the circle!)]

Adaptive Integration

So far this strategy seems pretty good, but what can go wrong with adaptive quadrature? Suppose we have a shape more complicated than a circle, given by a black-box function ?f(x,y). Will we always converge to the correct area as we increase the max depth? If not, is there a strategy that provides a 100% guarantee that we converge to within a given ? of the true area? What if we use regular sampling?

Thinking About Adaptive Integration

So far we have been adaptively estimating area---but we're not far at all from adaptively integrating a continuous function ?f(x,y) over a given domain (say, a square). Assuming we continue to use midpoint quadrature within each square of our adaptive grid, what's a reasonable refinement strategy for general-purpose integration?

Thinking About Numerical Integration

From these experiments it seems pretty clear that we should always use adaptive quadrature: we get a more accurate estimate with far fewer samples. But is it true that an adaptive approach will always be more efficient overall? When should we/shouldn't we use an adaptive strategy?